Embedded vs Platform vs Centralised SRE — Which Model Actually Scales?

Published on 23 April 2026 by Zoia Baletska

As systems grow, reliability stops being something a few engineers can “keep an eye on” and turns into a structural concern. Incidents become harder to trace, dependencies less obvious, and small failures start to cascade in ways that weren’t visible before. At that point, the question is no longer how to improve reliability, but how to organise for it.

Teams usually land on one of three approaches: embedding SREs into product teams, centralising them into a dedicated function, or investing in a platform model that shifts responsibility toward tooling and self-service. Each approach can work well, but only under certain conditions—and often only for a while.

How These Models Actually Behave

Embedded SRE

Embedding an SRE into a team tends to produce quick results. The engineer is close to the code, part of planning discussions, and involved in day-to-day trade-offs. Reliability concerns surface earlier, and decisions improve simply because someone is consistently asking the right questions.

This closeness creates momentum. Teams that previously treated incidents as interruptions begin to understand them as signals. SLOs become more than dashboards, and reliability starts influencing how features are designed rather than how outages are handled.

That said, this model carries a hidden constraint. It depends heavily on the number and quality of SREs you can place across teams. As the organisation grows, coverage becomes uneven. Some teams develop strong reliability practices, while others lag behind. Over time, embedded SREs can also become the default owners of reliability work, which quietly undermines the original goal of shared responsibility.

Centralized SRE

A centralised model introduces clarity. Responsibilities are well defined, standards are easier to enforce, and incident management follows consistent patterns. For organisations dealing with compliance or operating in regulated environments, this structure often feels necessary rather than optional.

However, distance changes how reliability is perceived. Product teams may begin to see it as something external, handled by specialists rather than built into their own work. Communication gaps appear, especially when decisions require deep service-level context. While consistency improves, responsiveness to team-specific challenges can suffer.

This trade-off becomes more visible as systems diversify. What works well for one service might not translate cleanly to another, and centralised teams often have to balance standardisation with flexibility—rarely an easy compromise.

Platform SRE

Platform-oriented SRE shifts the focus from direct intervention to enablement. Instead of joining teams or owning reliability processes, SREs build internal systems that make it easier for developers to manage reliability themselves. Observability tooling, deployment pipelines, and SLO frameworks fall into this category.

This approach scales more naturally because it reduces reliance on individual SREs. A well-designed platform can support dozens of teams without requiring proportional growth in headcount.

The challenge lies in adoption. Tools alone do not change behaviour. If teams lack experience or incentives to prioritise reliability, even the best platform can end up underused. In practice, platform models tend to work best when teams already have a baseline understanding of reliability and are willing to take ownership.

Where the Friction Appears

None of these models fail immediately. In fact, each tends to feel like the right solution at the moment it is introduced. The friction shows up later, usually in subtle ways.

Embedded SREs start strong but become harder to scale. Centralised teams bring order but risk creating distance. Platform approaches promise efficiency but depend on a level of maturity that not every team has reached.

These are not flaws in execution; they are structural limits. The model that worked at one stage begins to constrain the next.

How Needs Change with Growth

In smaller organisations, speed and learning tend to matter more than consistency. Embedding SREs into teams supports both. It allows reliability practices to develop organically and keeps feedback loops short.

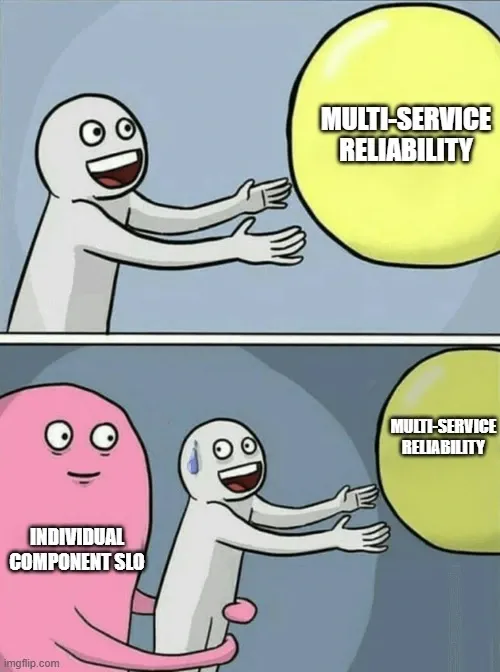

As the number of teams increases, coordination becomes more difficult. At this stage, a purely embedded approach often struggles to keep up, and some level of centralisation or shared structure starts to appear. Teams look for common standards, shared tooling, and clearer ownership.

In larger environments, where dozens of teams operate across complex systems, the emphasis shifts again. Scaling reliability through people alone becomes impractical. Platform capabilities—automated pipelines, standardised observability, reusable SLO frameworks—become essential.

What changes across these stages is not just the model, but the role reliability plays. It moves from being something teams learn, to something they coordinate and eventually to something they are expected to manage independently.

Why Hybrid Approaches Tend to Win

In practice, most organisations settle somewhere between these models.

A common pattern combines platform capabilities with selective embedding. Platform teams build the foundation—tooling, standards, automation—while embedded SREs help teams adopt and apply it in real contexts. This creates a useful feedback loop: recurring issues identified in teams can be addressed at the platform level, reducing duplication of effort.

Another variation keeps a centralised SRE function for governance and incident management, while relying on platform systems for day-to-day reliability work. This approach often fits environments where consistency and auditability are critical.

Some teams also experiment with rotating SREs between product groups. This spreads knowledge more evenly and reduces long-term dependency on specific individuals, although it comes with its own trade-offs around continuity and context.

Knowing When to Move On

One of the more difficult decisions is recognising when a model that once worked well is starting to hold things back.

With embedded SRE, the signs often appear in how teams behave rather than in metrics alone. If reliability improvements disappear once the SRE steps away, or if teams defer decisions instead of developing their own judgment, the model may be creating dependency rather than capability.

Another signal is repetition. When similar issues surface across multiple teams despite having SRE support, it usually points to a need for shared solutions rather than localised fixes.

At that point, the focus shifts. The question is no longer how to help individual teams solve problems, but how to reduce the likelihood of those problems appearing in the first place.

Measuring What Actually Scales

Understanding whether a model continues to work requires looking beyond surface-level indicators.

Platforms like Agile Analytics make it possible to track patterns that reveal how reliability evolves across teams. Instead of focusing on isolated metrics, they highlight trends—incident recurrence, shifts in error budget consumption, or changes in delivery speed following reliability improvements.

These patterns tell a more important story. They show whether progress is tied to specific individuals or embedded into the system itself. The distinction matters because only the latter can scale.

No Single Model Solves It All

There is a tendency to look for a definitive answer—a model that can be adopted and left in place. In reality, reliability evolves alongside the organisation.

Embedding helps teams build intuition. Centralisation introduces structure. Platform approaches extend that structure across scale. Each stage solves a different problem, and none of them remains optimal indefinitely.

What tends to separate teams that struggle from those that adapt is not the initial choice, but the willingness to revisit it.

Supercharge your Software Delivery!

Implement DevOps with Agile Analytics

Implement Site Reliability with Agile Analytics

Implement Service Level Objectives with Agile Analytics

Implement DORA Metrics with Agile Analytics