Kubernetes is no Silver Bullet

Published in March 2022 by Arjan Franzen


Why Docker?

At ZEN Software we see Docker as a configuration management innovation. It solves a number of frustrating developer problems and improves both the productivity of developers and the reliability of a software system.

Docker does this by:

- Removing all the dependency nightmares that cause production environments to be different from development environments.

- Increasing the efficiency of packaging and deployment (in terms of both size and time).

- Improving developer productivity, especially the inner loop of Edit -> Build -> Debug.

All excellent goals to achieve, and largely accomplished.

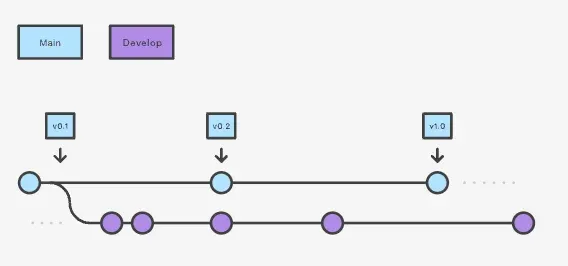

Single Box

But Docker is essentially a single-box solution: it focuses on running a single process and on preventing the configuration management nightmares that come with running that process.

The ‘fun’ starts when you need to restart or run many containers to cope with a high load.

Enter... Kubernetes, the silver bullet for Docker. Right? 😉

Kubernetes is great! (and complex)

Kubernetes is the rightful winner of the container orchestration competition, ahead of alternatives such as Diego and Docker Swarm. Today it is the industry standard for orchestrating Docker containers and is available as a managed service on all hyperscale clouds.

Should you, therefore, standardise on Kubernetes whenever you run a container? We’d like you to reconsider such a policy: Kubernetes has many features that offer a great deal of flexibility, but for smaller workloads that flexibility brings little return. Think mesh networks, cluster failover, ingress controllers.

If you want to ‘just run your container’ in a secure, production-grade fashion, Google and Amazon provide a number of great services:

Amazon’s AWS

- AWS Proton

- AWS App Runner

Google’s GCP

- Cloud Run

- App Engine

All the above services let DevOps teams focus on delivering software by taking care of much of the plumbing involved in running containers. These Platform as a Service (PaaS) offerings can serve containers to the public internet, complete with a Web Application Firewall (WAF) and automated certificates for HTTPS.
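To make ‘just run your container’ a little more concrete, here is a minimal sketch of the kind of process such a service would run inside a container. It assumes the platform tells the container which port to listen on through a `PORT` environment variable, as Cloud Run does; other services may expect the port to be configured differently. The names `resolve_port`, `HelloHandler` and `make_server` are made up for this illustration.

```python
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def resolve_port(env=os.environ, default=8080):
    """Return the port to listen on: PORT if set, else a default (assumption)."""
    return int(env.get("PORT", default))


class HelloHandler(BaseHTTPRequestHandler):
    """Answers every GET request with a short plain-text body."""

    def do_GET(self):
        body = b"hello from a container\n"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port):
    # Bind to all interfaces so traffic from outside the container can reach us.
    return HTTPServer(("0.0.0.0", port), HelloHandler)


if __name__ == "__main__":
    make_server(resolve_port()).serve_forever()
```

Everything else (HTTPS certificates, scaling, restarts) is what the managed service is expected to handle, which is exactly the plumbing you would otherwise configure yourself on Kubernetes.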
